we all know testing is valuable, but many dbt projects still underinvest in it. i am guilty of this myself: i ship the model, promise to add tests later, then move on to the next urgent request.

that pattern feels fast in the moment, but it is expensive over time. dbt tests are one of the easiest ways to protect trust in your data, and the setup cost is usually smaller than people expect.

quick answer

dbt tests are assertions about your data that run inside your transformation workflow. they verify assumptions like uniqueness, non-null keys, valid categorical values, and referential integrity. each test is a query that selects the records violating an assumption, so when a test fails, dbt reports how many records failed, and you can rerun the compiled query (or persist the results with `--store-failures`) to inspect the exact failing rows and debug quickly. if you are new to the feature, start with the official dbt data tests documentation and add a few high-signal tests to your most consumed models first.

who this is for

- analytics engineers who already build dbt models but still rely on manual spot checks

- data teams that have recurring data quality incidents in dashboards or reports

- anyone who wants a practical starting point instead of a perfect testing framework

why this matters

the cost of bad data is usually delayed, not immediate. a broken metric can sit in production for days before someone notices, and by then that number has already been used in a deck, decision, or leadership update.

the frustrating part is that most of these issues are predictable. duplicate primary keys, null foreign keys, unexpected status values, and invalid date ranges are all common failures. dbt tests can catch these early, near the model that introduced the issue.

i also think testing helps teams move faster, not slower. when tests are in place, i can refactor a model with more confidence because i have a safety net. without tests, every change feels risky and review cycles become slower because everyone is relying on intuition.

step-by-step

1) define the starting point

pick one model that is heavily consumed, for example an order fact table or a customer dimension. identify three assumptions that must always be true:

- the model key is unique

- important keys are never null

- status fields only contain known values

then encode those assumptions directly in your yml.

2) apply the change

start with generic tests in your schema file.

```yml
version: 2

models:
  - name: fct_orders
    columns:
      - name: order_id
        tests:
          - not_null
          - unique
      - name: customer_id
        tests:
          - not_null
          - relationships:
              to: ref('dim_customers')
              field: customer_id
      - name: order_status
        tests:
          - accepted_values:
              values: ["placed", "shipped", "cancelled", "returned"]
```

this single block gives you strong baseline coverage:

- `not_null` protects required fields

- `unique` protects grain

- `relationships` protects joins and referential integrity

- `accepted_values` protects enum-like business states
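under the hood, each generic test compiles to a select query that returns the offending rows, and the test fails if that query returns anything. as a rough sketch (the exact compiled sql differs across dbt versions and adapters), the `relationships` test on `customer_id` behaves like this singular test. the file name is hypothetical:

```sql
-- tests/orders_customer_id_in_dim_customers.sql (hypothetical file name)
-- approximates the generic `relationships` test on fct_orders.customer_id:
-- returns every non-null customer_id that has no matching row in dim_customers
WITH child AS (
    SELECT customer_id AS from_field
    FROM {{ ref('fct_orders') }}
    WHERE customer_id IS NOT NULL
),

parent AS (
    SELECT customer_id AS to_field
    FROM {{ ref('dim_customers') }}
)

SELECT child.from_field
FROM child
LEFT JOIN parent
    ON child.from_field = parent.to_field
WHERE parent.to_field IS NULL
```

because null keys are filtered out before the join, this check says nothing about missing customer ids. that is why the yml pairs `relationships` with a separate `not_null` test on the same column: the two cover different failure modes.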

next, add one singular test for a business rule that generic tests cannot express cleanly.

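```sql
-- tests/orders_non_negative_amount.sql
-- returns any order with a negative amount; zero rows means the test passes
SELECT
    order_id,
    order_amount
FROM {{ ref('fct_orders') }}
WHERE order_amount < 0
```

dbt treats every returned row as a failure, so this test passes only when no order has a negative amount. singular tests are ideal for custom rules like range checks, cross-column logic, and impossible combinations.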
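as an illustration of cross-column logic, here is a sketch of a second singular test. it assumes `fct_orders` has a `shipped_at` timestamp column, which is hypothetical and does not appear in the schema above, and it also assumes that returned orders must have shipped first; adjust both to match your model:

```sql
-- tests/orders_shipped_have_ship_date.sql (hypothetical file name)
-- cross-column rule: an order whose status says it left the warehouse
-- must carry a ship timestamp.
-- assumes a shipped_at column on fct_orders (hypothetical)
SELECT
    order_id,
    order_status,
    shipped_at
FROM {{ ref('fct_orders') }}
WHERE order_status IN ('shipped', 'returned')
  AND shipped_at IS NULL
```

like the amount check, it passes only when the query returns zero rows, and any rows it does return are exactly the orders to investigate.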

3) validate the result

run tests in a tight loop while you are developing:

```bash
dbt test --select fct_orders
```

for a broader gate in CI or before merging, run:

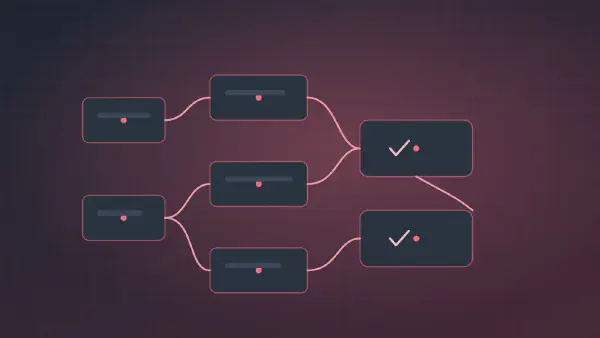

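```bash
dbt build --select fct_orders+
```

`dbt build` runs models and their tests together in dag order, which is useful when you want to validate both transformation logic and data quality in one pass. the trailing `+` selects `fct_orders` plus every model downstream of it, so the models that depend on it are also built and tested. if a test fails, dbt skips the downstream nodes that depend on the failing model instead of building on bad data.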

a practical prioritization rule

when time is limited, i prioritize tests in this order:

- key integrity on high-consumption models (`unique` plus `not_null`)

- foreign key integrity (`relationships`) on joins that power dashboards

- controlled fields (`accepted_values`) where business logic depends on a finite set of values

- one custom singular test for the highest-risk metric or business rule

this sequence catches a large share of real incidents with minimal setup.

faq

what should i test first in a mature project with little coverage?

start where breakage is most expensive, not where modeling is most elegant. choose one or two heavily consumed models and add key integrity plus relationships first. then add one singular test for the business rule that has caused the most historical pain.

do dbt tests slow down delivery?

they add some upfront work, but they usually reduce cycle time later. test failures are cheaper during development than after release, and tests make refactors safer because you can verify assumptions continuously instead of rediscovering issues in production.