quick answer#

dbt snapshots provide a native way to track slowly changing dimensions over time. by migrating from custom merge statements to native dbt snapshots, you can simplify your codebase, rely on built-in history tracking, and ensure your downstream models always have access to point-in-time records.

who this is for#

- audience: data engineers and analytics engineers using dbt

- prerequisites: basic knowledge of dbt models, sql, and data warehousing concepts

- when to use this guide: when you need to track historical changes to mutable source records and want to move away from manual merge logic

why this matters#

tracking historical changes is a common requirement in data warehousing. building custom merge logic to handle inserts, updates, and history tracking is error-prone and difficult to maintain. dbt snapshots handle the heavy lifting of history tracking out of the box. this ensures you do not lose historical context when source systems overwrite data.

moving from merge to snapshot#

recently, i migrated several historical tables from a custom merge strategy to native dbt snapshots. the previous approach relied on complex merge statements that manually checked for changes and inserted or updated rows to maintain history. this was difficult to read and even harder to debug.

by adopting native dbt snapshots, the logic became declarative. instead of writing the exact update and insert commands, i only needed to define the source query and configure how dbt should detect changes. the downstream consumer views then filter the snapshot output to return the current row or a point-in-time record.

the core shift in thinking#

when using snapshots, your snapshot definition should remain source-representative. do not apply business date-window filtering in the snapshot definition itself. instead, capture the raw history and apply your logic for which rows to return in downstream consumer views.

for example, to get the current row in a downstream model, you filter using the sentinel value:

select *

from {{ ref('my_snapshot_st') }}

where dbt_valid_to = '9999-12-31 23:59:59'to get a freeze record for a specific point in time, you derive a freeze timestamp and filter:

select *

from {{ ref('my_snapshot_st') }}

where dbt_valid_from <= freeze_ts and dbt_valid_to > freeze_tsbasic example#

here is a basic example of a dbt snapshot using the check strategy. this snapshot tracks changes to a practice affiliation table.

{% snapshot practice_affiliation_st %}

{{

config(

target_schema = 'snapshots',

strategy = 'check',

unique_key = ['fmno', 'cycle', 'committee', 'hierarchy'],

check_cols = 'all',

hard_deletes = 'invalidate',

dbt_valid_to_current = "to_timestamp_ntz('9999-12-31 23:59:59')"

)

}}

select

fmno,

cycle,

committee,

practice_name,

type,

hierarchy

from {{ ref('source_practice_affiliation_v') }}

{% endsnapshot %}configuration options#

dbt snapshots offer several configuration options that control how changes are detected and recorded. you can read more about these in the official dbt snapshot documentation.

here are the key options and what they control:

- target_schema: the schema where the snapshot table will be built

- strategy: determines how dbt detects changes, with the two main options being timestamp and check

- unique_key: the primary key of the record, which can be a single column or a list of columns for a composite key

- check_cols: used with the check strategy to specify which columns to monitor for changes, accepting a list of column names or the word all

- updated_at: used with the timestamp strategy to specify the column that indicates when the source row was last modified

- hard_deletes: controls how dbt handles rows that disappear from the source, such as setting it to invalidate to close the current row when a key is no longer present

- dbt_valid_to_current: overrides the default null value for current records, allowing you to set a far-future date to make downstream filtering easier

timestamp vs check strategy#

the choice between timestamp and check strategies is critical.

use the timestamp strategy when your source has a reliable updated column that changes whenever the row changes. dbt compares the source timestamp to the snapshot timestamp to decide if a new version is needed.

use the check strategy when you do not have a reliable updated timestamp, or when you want to detect any change in a specific set of columns. dbt compares the actual values of the check columns between the source and the current snapshot row. if any checked column differs, dbt closes the current row and inserts a new version.

in my recent work, i found that the check strategy with all columns checked and a composite unique key was the most robust approach for sources where the updated timestamp was synthetic or not authoritative.

gotchas and lessons learned#

migrating to snapshots surfaced a few important lessons:

- upstream scope gating: if your upstream source query includes filters that remove keys, and you have hard deletes configured to invalidate, dbt will intentionally close the current rows for those missing keys

- composite keys: dbt fully supports composite unique keys, and passing a list of columns ensures that dbt tracks history at the correct grain

- duplicate source rows: snapshots expect the source data to be unique at the unique key grain, so if your source contains duplicate keys, the snapshot will fail or bloat

- defensive deduplication: in some cases, i had to add a defensive qualify row number guard in the snapshot definition to collapse known duplicate-key source rows before dbt processed them

- sentinel values: using a sentinel value for current rows instead of null makes downstream queries much cleaner, allowing you to use an equals operator instead of checking for nulls

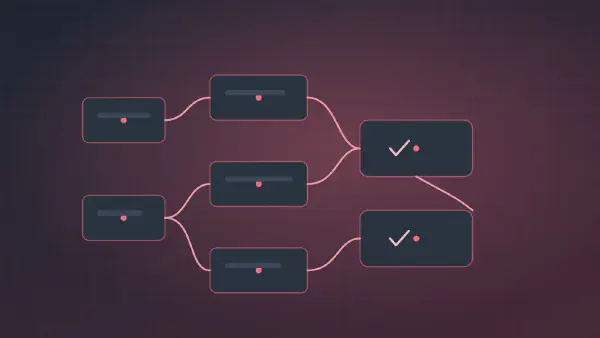

deployment and automation#

snapshots are not updated automatically when you run your standard dbt build commands. they require a dedicated command: dbt snapshot.

if you do not automate this, your history tracking will be manual and prone to gaps. to ensure continuous history capture, you must schedule the snapshot command to run on a regular cadence.

in a production environment, this usually means setting up a continuous integration workflow or an orchestrator task. for example, you can use automated workflows to run snapshot tags on daily, hourly, or monthly schedules.

a typical automated workflow might look like this:

- a scheduled trigger fires the workflow

- the workflow checks out the repository and sets up the dbt environment

- the workflow executes the snapshot command for specific tags

- dbt connects to the warehouse, compares the source data to the existing snapshot tables, and applies any necessary inserts or updates

by decoupling the snapshot schedule from your standard model runs, you can capture history at the exact frequency your business logic requires.